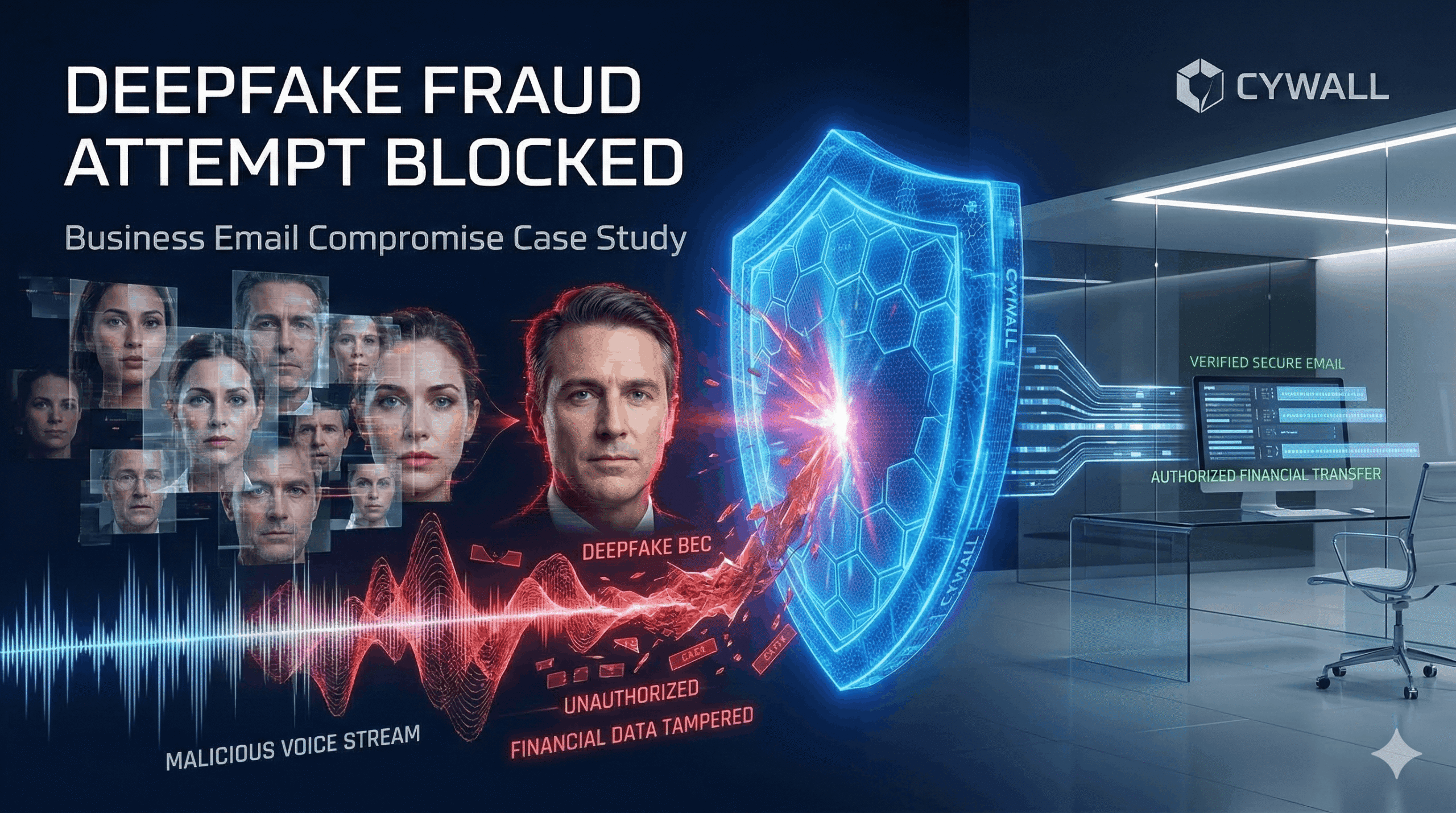

Vertex Capital was targeted by a sophisticated “Business Email Compromise” (BEC) attempt in which attackers used AI-generated voice cloning (deepfakes) to impersonate the CFO. Although the immediate fraud was caught, the firm realized that its traditional email filters and basic “once-a-year” training were obsolete against generative AI threats. Cywall was hired to transform its human firewall and technical email defenses.

The firm faced a new breed of "identity-less" attacks that bypassed traditional security.

Cywall implemented a Human-Centric Identity Protection layer.

Traditional antivirus tools cannot stop these emails. Because the messages often contain no malicious links or attachments, only "socially engineered" text, such tools are blind to them. Our solution looks at behavioral patterns instead.

We implement "Challenge-Response" protocols. If an executive receives an unusual verbal request, they are trained to ask a pre-arranged, non-digital secret question whose answer an AI would not have in its data set.

The analysis does not intrude on private conversations. The AI analyzes the metadata and linguistic patterns of the communication to detect fraud; it does not "read" or store the personal content of those conversations.
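To give a rough idea of what checking metadata and linguistic patterns can look like, here is a minimal Python sketch. It is an illustration only and not Cywall's actual detection logic: the `Email` type, the `bec_signals` function, the phrase lists, and the example domains are all hypothetical. It returns only the names of the signals it finds and keeps no copy of the message body.

```python
from dataclasses import dataclass

# Hypothetical phrase lists, chosen only for illustration.
URGENCY_TERMS = ("urgent", "immediately", "asap", "today", "confidential")
PAYMENT_TERMS = ("wire transfer", "bank details", "invoice", "payment")


@dataclass
class Email:
    from_addr: str
    reply_to: str      # empty string when no Reply-To header is set
    display_name: str
    body: str


def domain(addr: str) -> str:
    """Return the lowercased domain part of an email address."""
    return addr.rsplit("@", 1)[-1].lower()


def bec_signals(msg: Email, trusted_domain: str, executives: set[str]) -> list[str]:
    """Return the names of BEC warning signs found in one message."""
    signals = []
    body = msg.body.lower()

    # Metadata: replies would be routed to a different domain than the sender's.
    if msg.reply_to and domain(msg.reply_to) != domain(msg.from_addr):
        signals.append("reply_to_mismatch")

    # Metadata: an executive's name is used from outside the company domain.
    if msg.display_name.lower() in executives and domain(msg.from_addr) != trusted_domain:
        signals.append("executive_name_external_domain")

    # Linguistic: pressure to act quickly or secretly.
    if any(term in body for term in URGENCY_TERMS):
        signals.append("urgency_language")

    # Linguistic: the message asks for money to be moved.
    if any(term in body for term in PAYMENT_TERMS):
        signals.append("payment_request")

    return signals


suspicious = Email(
    from_addr="jane.doe@vertexcapital-mail.example",
    reply_to="jane.doe@freemail.example",
    display_name="Jane Doe",
    body="I need an urgent wire transfer processed today. Keep this confidential.",
)
print(bec_signals(suspicious, "vertexcapital.example", {"jane doe"}))
```

A message that trips several signals at once, like the one above, would be a candidate for the challenge-response check before any money moves. A production system would weigh far more context than these four rules.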

After four months of Cywall's adaptive simulations, the "Click-Through Rate" on phishing attempts dropped from 12% to under 1.5%.
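For readers who want the drop expressed as a relative figure, the short sketch below works it out from the two rates quoted above. The function name `relative_reduction` is ours, and 1.5% is used as an upper bound because the reported final rate is below that value.

```python
def relative_reduction(before: float, after: float) -> float:
    """Percentage decrease from `before` to `after`."""
    return (before - after) / before * 100


# 12% before the program, 1.5% used as the upper bound afterwards.
print(relative_reduction(12.0, 1.5))  # 87.5
```

Since the final rate is below 1.5%, the relative reduction in click-throughs is at least 87.5%.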

While Vertex Capital is a high-value target, AI-phishing is becoming "commoditized." Any business that handles wire transfers or sensitive client data is now a target and needs this level of defense.

Copyright © 2026 All Rights Reserved.